There is plenty to get through in this post. There is some big news, so let’s get right to it!

AAX Release – aX Plugins in ProTools

A lot of people have asked for the aX Ambisonics Plugins to be available in AAX format so I’m very happy to release them. At this point they are as a public beta (you can download the demo versions to test on your system) but they have been stable for private beta testers.

Please note that the plugins run on the Ambisonic buses available in Pro Tools Ultimate (formerly HD). Unfortunately, you cannot use them with the standard version of Pro Tools at this point.

Also note that the seventh order a7 Plugins will run in Pro Tools but are limited to third order processing by Pro Tools bus structure. If you work exclusively with Pro Tools, and don’t need VST versions, then you can save money by picking up the a3 Plugins – you won’t benefit from the a7 versions.

Bundle Discounts

You can now buy the full set of 7 plugins in one click as a bundle for a discounted price of 30% less when compared to buying them all individually. You can get them from the online shop here.

Sale Pricing

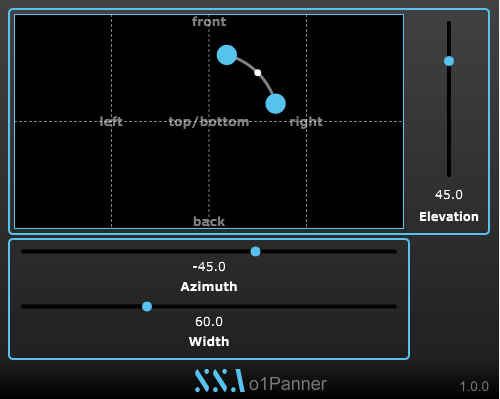

Until 31st July 2018 there will be at least a 20% discount on all plugin prices. The aXPanner and aXMonitor will continue with the 50% discount. Check out the shop for more details.

Academic Discount

If you plan on using the plugins for academic or educational purposes then the you can get in touch for a discount code for substantial extra discounts.

New Activation System

In order to provide offline activation, and perhaps a subscription payment option, the plugin activation system has been changed. If you are an existing customer you will need to enter the new activation number to the plugins after installing the new updates. This should be available in your account. I will also email out all of the updated serial numbers to ensure everyone gets them. If they are not listed in your account or you need the update before I have a chance to email you, please get in touch

Bug fixes

All formats of the aX Plugins have had a number of improvements to stability and performance under the hood. This won’t change how you use them but should improve the overall experience.

Aside from these performance enhancements, a bug was fixed in the macOS version of the aXMonitor that stopped custom HRTFs loading after they had been processed.

Coming Soon…

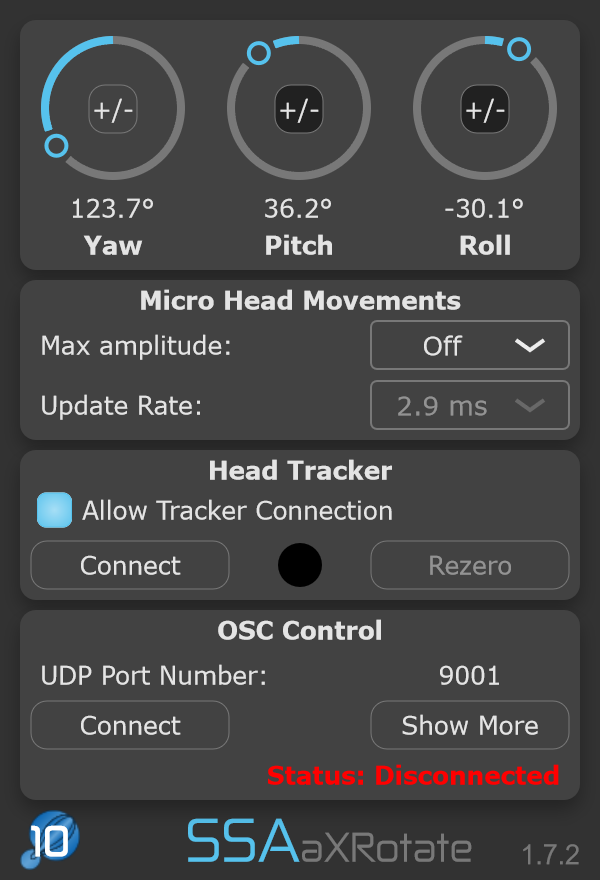

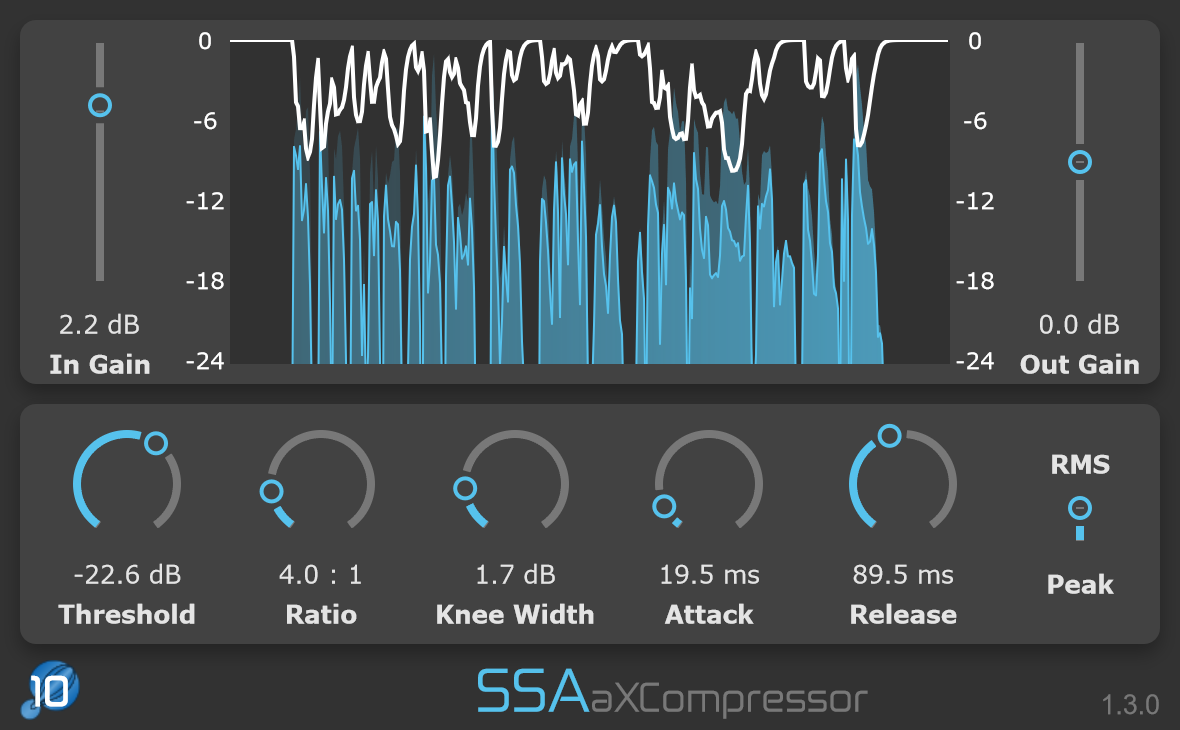

I am working on a few new plugins that will be out in the next few months. Some will be simple (and free), while another is shaping up to be something really interesting. Check back here to keep up to date!

In the meantime, if you want to support further development you can purchase the existing plugins at the web shop. Thank you for your support.